How To Import Data From Sql Into Hdfs Using Shell Script

HDFS Commands

In my previous blogs, I have already discussed what HDFS is, its features, and its architecture. The first step on the journey to Big Data training is executing HDFS commands and exploring how HDFS works. In this blog, I will talk about the HDFS commands you can use to access the Hadoop File System.

So, let me walk you through the important HDFS commands that are used most frequently when working with the Hadoop File System, and how they work.

-

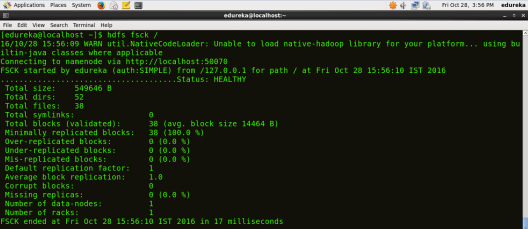

fsck

HDFS Command to check the health of the Hadoop file system.

Command: hdfs fsck /
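
If you want to use this check inside a script, you can wrap it in a small function that turns the report into a pass/fail result. This is only a sketch: the helper name `hdfs_is_healthy` is made up for this example, and it assumes the fsck summary contains the words "is HEALTHY" when no problems were found, so verify that against the output of your own Hadoop version.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if fsck reports the given path as HEALTHY.
# hdfs_is_healthy is a hypothetical helper name, not part of Hadoop.
hdfs_is_healthy() {
    local path="${1:-/}"
    # Assumption: the fsck summary contains "is HEALTHY" for a clean file system.
    hdfs fsck "$path" 2>/dev/null | grep -q "is HEALTHY"
}

# Example:
#   if hdfs_is_healthy /; then echo "HDFS looks healthy"; else echo "fsck reported problems" >&2; fi
```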

-

ls

HDFS Command to display the list of Files and Directories in HDFS.

Command: hdfs dfs -ls /

-

mkdir

HDFS Command to create the directory in HDFS.

Usage: hdfs dfs -mkdir /directory_name

Command: hdfs dfs -mkdir /new_edureka

Note: Here we are trying to create a directory named "new_edureka" in HDFS.

-

touchz

HDFS Command to create a file in HDFS with file size 0 bytes.

Usage: hdfs dfs -touchz /directory/filename

Command: hdfs dfs -touchz /new_edureka/sample

Note: Here we are trying to create a file named "sample" in the directory "new_edureka" of HDFS with file size 0 bytes.

-

du

HDFS Command to check the file size.

Usage: hdfs dfs -du -s /directory/filename

Command: hdfs dfs -du -s /new_edureka/sample
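
The mkdir, touchz, and du commands shown so far can be chained in a short script. The following is a minimal sketch, not a definitive implementation: `create_empty_file` is a hypothetical helper name, and the `-p` flag on mkdir is added here so that the script does not fail when the directory already exists.

```shell
#!/usr/bin/env bash
# Sketch: create a directory and an empty file in HDFS, then report the file's size.
# create_empty_file is a hypothetical helper name, not part of Hadoop.
create_empty_file() {
    local dir="$1" name="$2"
    hdfs dfs -mkdir -p "$dir" || return 1       # -p: no error if the directory already exists
    hdfs dfs -touchz "$dir/$name" || return 1   # create a zero-length file
    hdfs dfs -du -s "$dir/$name"                # the first column is the size in bytes
}

# Example:
#   create_empty_file /new_edureka sample
```

For a file created with touchz, the size reported by du should be 0.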

-

cat

HDFS Command that reads a file on HDFS and prints the content of that file to the standard output.

Usage: hdfs dfs -cat /path/to/file_in_hdfs

Command: hdfs dfs -cat /new_edureka/test

-

text

HDFS Command that takes a source file and outputs the file in text format.

Usage: hdfs dfs -text /directory/filename

Command: hdfs dfs -text /new_edureka/test

-

copyFromLocal

HDFS Command to copy the file from a local file system to HDFS.

Usage: hdfs dfs -copyFromLocal <localsrc> <hdfs destination>

Command: hdfs dfs -copyFromLocal /home/edureka/test /new_edureka

Note: Here test is the file present in the local directory /home/edureka. After the command gets executed, the test file will be copied to the /new_edureka directory of HDFS.

-

copyToLocal

HDFS Command to copy the file from HDFS to the local file system.

Usage: hdfs dfs -copyToLocal <hdfs source> <localdst>

Command: hdfs dfs -copyToLocal /new_edureka/test /home/edureka

Note: Here test is a file present in the new_edureka directory of HDFS. After the command gets executed, the test file will be copied to the local directory /home/edureka.

-

put

HDFS Command to copy a single source or multiple sources from the local file system to the destination file system.

Usage: hdfs dfs -put <localsrc> <destination>

Command: hdfs dfs -put /home/edureka/test /user

Note: The command copyFromLocal is similar to the put command, except that the source is restricted to a local file reference.

-

get

HDFS Command to copy files from HDFS to the local file system.

Usage: hdfs dfs -get <src> <localdst>

Command: hdfs dfs -get /user/test /home/edureka

Note: The command copyToLocal is similar to the get command, except that the destination is restricted to a local file reference.
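
To see put and get working together, you can upload a file, download it again into a different local directory, and compare the two copies. The sketch below assumes the names `round_trip` and its three arguments, which are invented for this example; it also assumes the file does not yet exist at the HDFS destination, because put refuses to overwrite an existing file by default.

```shell
#!/usr/bin/env bash
# Sketch: upload a local file to HDFS, download it again, and compare both copies.
# round_trip is a hypothetical helper name, not part of Hadoop.
round_trip() {
    local local_file="$1" hdfs_dir="$2" out_dir="$3"
    local name
    name="$(basename "$local_file")"
    hdfs dfs -put "$local_file" "$hdfs_dir" || return 1      # local -> HDFS
    hdfs dfs -get "$hdfs_dir/$name" "$out_dir" || return 1   # HDFS -> local
    cmp -s "$local_file" "$out_dir/$name"                    # exit status 0 if identical
}

# Example (the /tmp/download directory must already exist):
#   round_trip /home/edureka/test /user /tmp/download && echo "copies match"
```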

-

count

HDFS Command to count the number of directories, files, and bytes under the paths that match the specified file pattern.

Usage: hdfs dfs -count <path>

Command: hdfs dfs -count /user
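
The raw output of count is a row of unlabeled numbers, so a script may want to label them. Here is a small sketch that assumes the default column order DIR_COUNT, FILE_COUNT, CONTENT_SIZE, PATHNAME and a path without spaces; `summarize_count` is a made-up helper name.

```shell
#!/usr/bin/env bash
# Sketch: print the result of "hdfs dfs -count" with labels.
# summarize_count is a hypothetical helper name, not part of Hadoop.
summarize_count() {
    local path="$1"
    # Assumption: default columns are DIR_COUNT FILE_COUNT CONTENT_SIZE PATHNAME.
    hdfs dfs -count "$path" |
        awk '{ printf "%s: %s dirs, %s files, %s bytes\n", $4, $1, $2, $3 }'
}

# Example:
#   summarize_count /user
```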

-

rm

HDFS Command to remove the file from HDFS.

Usage: hdfs dfs -rm <path>

Command: hdfs dfs -rm /new_edureka/test

-

rm -r

HDFS Command to remove the entire directory and all of its content from HDFS.

Usage: hdfs dfs -rm -r <path>

Command: hdfs dfs -rm -r /new_edureka
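
Because rm -r removes everything under the given path, it can be worth guarding it in scripts. The following sketch uses a hypothetical helper named `safe_rm_r` that refuses to act on an empty path or on the root directory, and uses `hdfs dfs -test -d` to confirm that the target is an existing directory before removing it. The checks are illustrative, not a complete safety net.

```shell
#!/usr/bin/env bash
# Sketch: remove an HDFS directory recursively, with a few basic guards.
# safe_rm_r is a hypothetical helper name, not part of Hadoop.
safe_rm_r() {
    local path="$1"
    # Refuse obviously dangerous targets.
    case "$path" in
        ""|"/") echo "refusing to remove '$path'" >&2; return 2 ;;
    esac
    # -test -d returns 0 only if the path exists and is a directory.
    if ! hdfs dfs -test -d "$path"; then
        echo "not a directory in HDFS: $path" >&2
        return 1
    fi
    hdfs dfs -rm -r "$path"
}

# Example:
#   safe_rm_r /new_edureka
```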

-

cp

HDFS Command to copy files from source to destination. This command allows multiple sources as well, in which case the destination must be a directory.

Usage: hdfs dfs -cp <src> <dest>

Command: hdfs dfs -cp /user/hadoop/file1 /user/hadoop/file2

Command: hdfs dfs -cp /user/hadoop/file1 /user/hadoop/file2 /user/hadoop/dir

-

mv

HDFS Command to move files from source to destination. This command allows multiple sources as well, in which case the destination needs to be a directory.

Usage: hdfs dfs -mv <src> <dest>

Command: hdfs dfs -mv /user/hadoop/file1 /user/hadoop/file2

-

expunge

HDFS Command that empties the trash.

Command: hdfs dfs -expunge

-

rmdir

HDFS Command to remove an empty directory.

Usage: hdfs dfs -rmdir <path>

Command: hdfs dfs -rmdir /user/hadoop

-

usage

HDFS Command that returns the help for an individual command.

Usage: hdfs dfs -usage <command>

Command: hdfs dfs -usage mkdir

Note: By using the usage command, you can get information about any command.

-

help

HDFS Command that displays help for a given command, or for all commands if none is specified.

Command: hdfs dfs -help

This is the end of the HDFS Commands blog. I hope it was informative and you were able to execute all the commands. For more HDFS commands, you may refer to the Apache Hadoop documentation.

Now that you have executed the above HDFS commands, check out the Hadoop training by Edureka, a trusted online learning company with a network of more than 250,000 satisfied learners spread across the globe. The Edureka Big Data Hadoop Certification Training course helps learners become experts in HDFS, Yarn, MapReduce, Pig, Hive, HBase, Oozie, Flume and Sqoop using real-time use cases in the Retail, Social Media, Aviation, Tourism, and Finance domains.

Got a question for us? Please mention it in the comments section and we will get back to you.

Source: https://www.edureka.co/blog/hdfs-commands-hadoop-shell-command

Posted by: guitierrezbessithomfor.blogspot.com